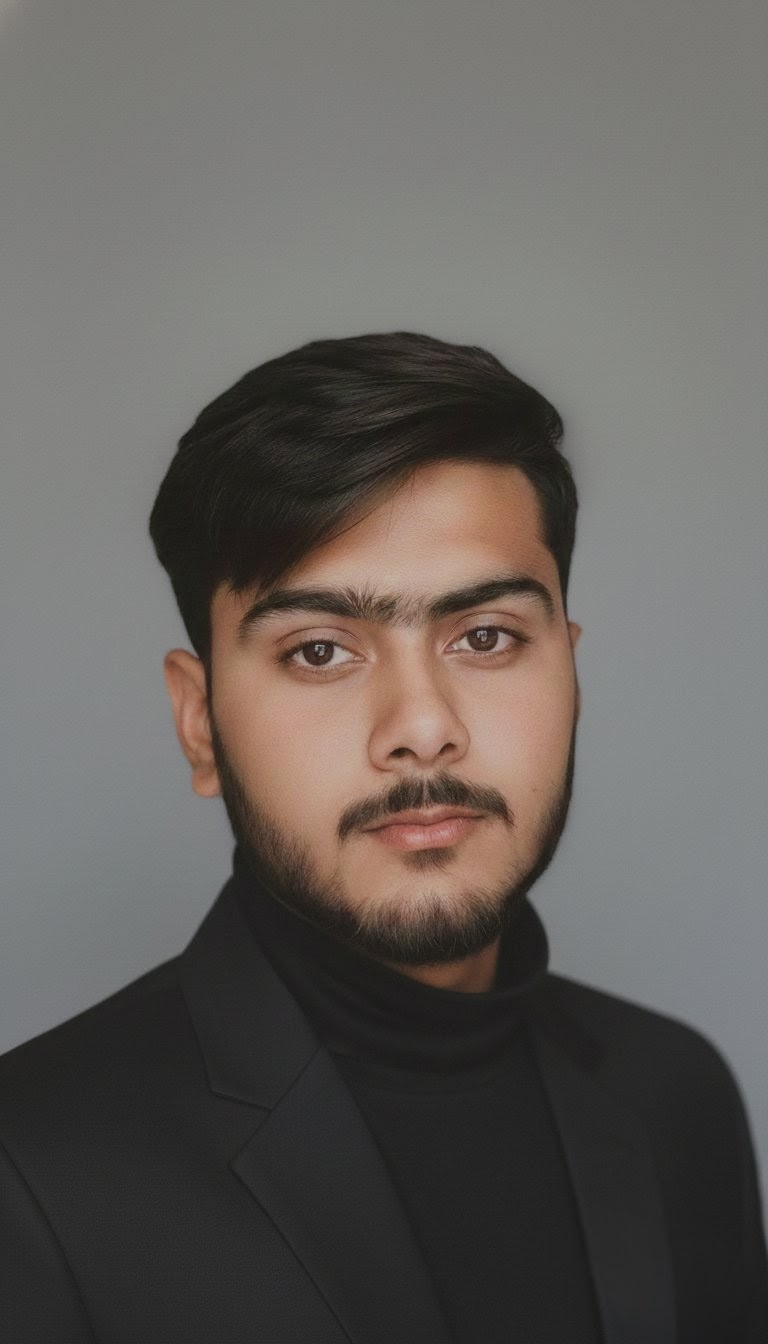

Author: Muhammad Haider

> LLM Systems Engineer · Local AI Specialist · Founder, Decodes Future

About Me

My name is Muhammad Haider, and I am the founder of Decodes Future, a focused engineering lab for mastering Large Language Models.

I am an LLM systems engineer with over 5 years of hands-on experience building and deploying AI systems. My focus is the practical, deterministic engineering of LLMs — not the hype cycle.

Every guide, benchmark, and architectural pattern on this site is personally tested and validated by me on real hardware. I specialize in local AI deployment, model quantization (GGUF, AWQ, GPTQ), open-source model optimization, and production RAG pipelines.

I bridge the gap between high-level AI research and the local hardware execution that ensures data sovereignty, cost efficiency, and real performance. I have run hundreds of inference experiments across the Llama, Mistral, Qwen, and DeepSeek model families using tools like llama.cpp, Ollama, and vLLM.

I do not teach what I have not built. If it is on this site, it is a blueprint I have personally executed and stress-tested.

[EXPERTISE] Local LLM Deployment · Quantization · RAG Engineering · Prompt Systems · Fine-Tuning (LoRA/QLoRA)

Experience & Credentials

Primary Tech Stack

Why the Mastering LLMs Lab Exists

The internet is flooded with AI hype but thin on engineering rigor.

Most sites publish "top 10 ChatGPT hacks" or mirror the same surface-level press releases. Developers, founders, and systems builders need something deeper: the actual patterns for building reliable, local, and cost-effective AI systems.

Master the systems behind the models — specialized for local and production-ready applications.

Large Language Models are already redefining the software stack. You do not need a PhD to build with them, but you do need an engineering mindset to deploy them effectively. That is what the Mastering LLMs Lab provides.

Field Operations

Specialization Areas

- Local LLM Infrastructure (llama.cpp, Ollama, vLLM)

- Quantization Pipelines (GGUF, AWQ, GPTQ)

- Production RAG Systems (ChromaDB, LlamaIndex)

- Fine-Tuning (LoRA, QLoRA, Unsloth)

- Structured Output & Prompt Engineering

- Cross-Model Benchmarking & Evaluation

Lab Status

// UPTIME: 99.9%

// CONTENT_POLICY: Hands-on tested only

// TOPIC_SCOPE: LLM Engineering exclusively

// DATA_SOVEREIGNTY: Executed locally

Lab Principles

At Decodes Future, we prioritize architectural sovereignty and technical clarity. Every resource in our lab is designed to empower developers to build AI systems that are private, efficient, and fully under human creative control. We believe that the future of software engineering lies in the mastery of Large Language Models as a fundamental layer of the modern stack.

Technical Rigor

We test every blueprint and model benchmark in local production environments before publishing it. Our goal is to go beyond surface-level coverage and document the specific engineering patterns that let AI practitioners move from theory to systems that scale.